Part of file management is moving files to the storage that suits them best. Within Spectrum Scale we call these storage types "pools" and the moving "migrating". Migration is done online, while the file stays in its directory. Copying a file is different: the copy is a new file, so the placement rules are applied again. With the earlier examples of placement policies, you can place a file based on file type, but not on file size, as the size is not yet known when the file is created. Also, if a file has not been used for a while, you may want to migrate it to a slow pool or, if it is really busy, to a fast pool. Or compress it, or even delete it. Migration policies allow for all this.

These policies are written as SQL-like rules and can be stored in a separate file or in the filesystem data itself. We'll use the latter method, as it is easy and the default when using the GUI. In the GUI, go to the Files icon on the left-hand side, then "Information Lifecycle", and click on "Add Rule".

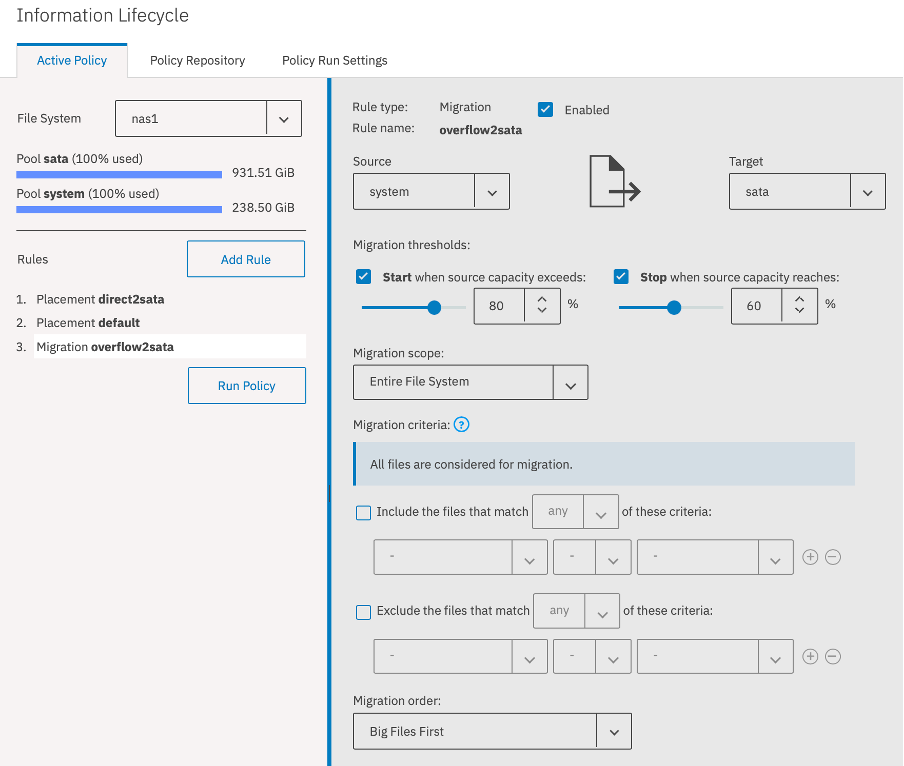

We'll create a migration policy that moves data from the system pool to the sata pool when the SSD is becoming full. Make up a nice name (we use overflow2sata) and select the "Migration" rule type. Set the following options:

- Set the source pool to system, and the target pool to sata.

- Set the migration threshold to start at 80% full, and stop at 60%

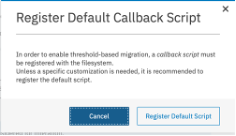

- You will see a pop-up that asks you to enable a callback script. Spectrum Scale uses these scripts to react to events, such as a filesystem that is (almost) full. Register the script; you only need to do this once.

- Migration order: "Big files first", so we're done quicker.

Click "Apply" in the left-hand pane; the rules are now saved.
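For readers who prefer to see the rule as text, here is a minimal sketch of what a hand-written equivalent of this threshold rule could look like. The rule name is taken from this post; the weight expression `KB_ALLOCATED` is an assumption for "Big files first", and the text the GUI actually generates may differ.

```shell
#!/bin/sh
# Write a hand-written sketch of the threshold rule to a scratch file.
# The WEIGHT expression is an assumption; the GUI may generate something else.
policy_file=$(mktemp)
cat > "$policy_file" << 'EOF'
/* Start migrating when 'system' is 80% full, stop once it is back at 60%. */
/* Files with the most allocated space are picked first. */
RULE 'overflow2sata'
  MIGRATE FROM POOL 'system'
  THRESHOLD(80,60)
  WEIGHT(KB_ALLOCATED)
  TO POOL 'sata'
EOF
cat "$policy_file"
```

A file like this can be evaluated without moving any data by passing it to `mmapplypolicy` with `-P` and `-I test`, which is a safe way to check a rule before trusting it.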

The order of the rules on the left suggests that placement rules are checked before migration rules. That is sort of true, but remember that migration rules are not "active": they need to be triggered by a callback, run manually, or scheduled using crontab. The rules stored in the filesystem itself are intended to be only those that are triggered automatically. Rules that need manual activation should be stored in a separate repository, which can be done in the "Policy Repository" tab.

For simplicity, we will ignore the separate repositories, and dump all rules in the "Active Repository".

Let's add a compression rule for some file types. Add a rule (ours is called compress_stuff) and specify "Compression" as the rule type. Set the following options:

- Set the pool to sata

- Select "Include the files that match any of these criteria:"

- Extension IN .tar

- Extension IN .iso

Drag the rule to be above the overflow2sata rule and "Apply Changes".
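As a rough illustration, the compression rule could be written by hand as shown below. The rule name and the `MIGRATE FROM POOL ... COMPRESS('yes')` part match what `mmapplypolicy` prints later in this post; the `WHERE` clause is an assumption about how the two extension criteria translate, and `LOWER()` makes the match case-insensitive, which the GUI rule may not do.

```shell
#!/bin/sh
# Hand-written sketch of the compression rule.
# The WHERE clause is an assumption, not the text generated by the GUI.
compress_policy=$(mktemp)
cat > "$compress_policy" << 'EOF'
/* Compress .tar and .iso files that live in the sata pool. */
RULE 'compress_stuff'
  MIGRATE FROM POOL 'sata'
  COMPRESS('yes')
  WHERE LOWER(NAME) LIKE '%.tar' OR LOWER(NAME) LIKE '%.iso'
EOF
cat "$compress_policy"
```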

Compressing files makes better use of the available disk space. Regrettably, deduplication is not available within the GPFS file system; that would have been nice. Next, we move unused data from the SSD to the sata disk, so that hopefully we do not need the overflow rule at all. Let's create a rule that moves cold data, but only when it is a certain minimum size. Small files belong on SSD.

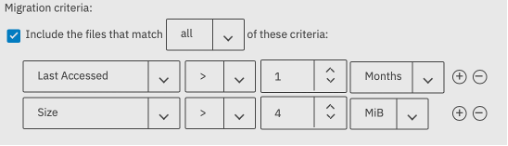

Add a migration rule, and set the following options:

- From pool system to pool sata

- Include the files that match all of these criteria:

- last access over one month ago

- size larger than 4 MiB

Drag the rule to above the compression rule and click "Apply Changes". Ignore the warning; this rule will be triggered by a scheduled run.
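Before letting a rule like this loose, it can help to preview which files match the same two criteria. The sketch below uses plain `find` for that. It is only an approximation: `find` knows nothing about storage pools, `-atime +30` counts whole 24-hour periods, and the helper name `list_cold_candidates` and the `/nas1` mount point are assumptions for illustration.

```shell
#!/bin/sh
# list_cold_candidates DIR: print regular files under DIR that were last
# accessed more than 30 days ago and are larger than 4 MiB.
list_cold_candidates() {
    find "$1" -type f -atime +30 -size +4M -print
}

# Only scan when the assumed mount point exists on this machine.
if [ -d /nas1 ]; then
    list_cold_candidates /nas1
fi
```

The policy engine evaluates the real rule much faster than a `find` walk on a large filesystem, so treat this as a sanity check on a subdirectory rather than a replacement.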

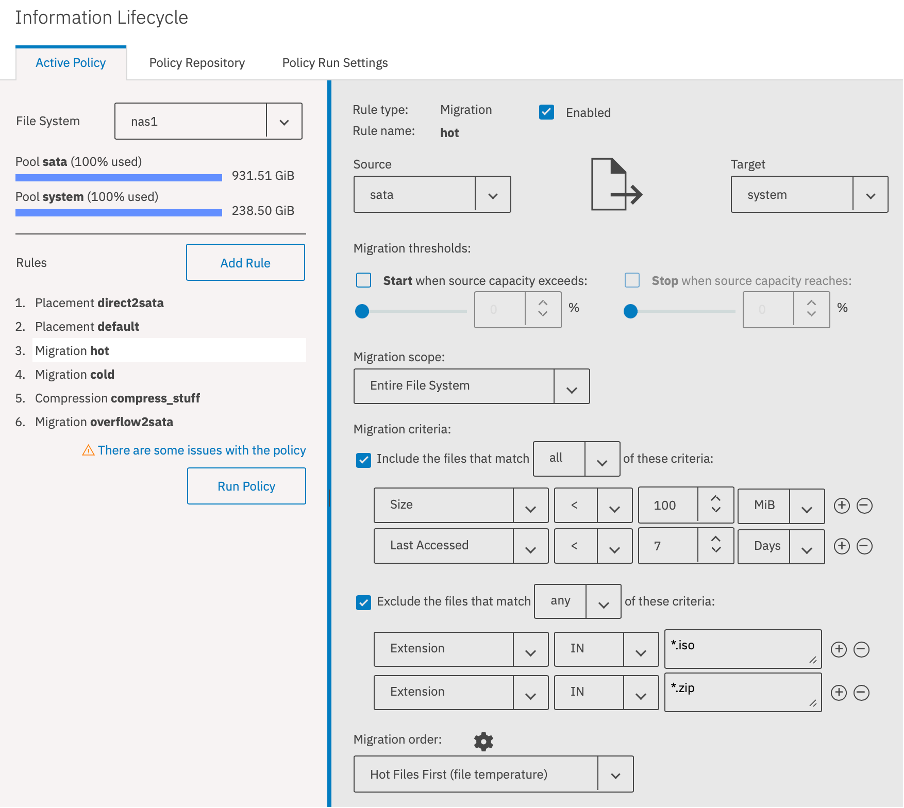

The last rule we'll add for now moves hot data back to SSD. Every time a file is accessed, a heat counter is increased, and over time it decreases again. This can be used as an auto-tiering mechanism, but with more control over which types of files go where. Create a new migration rule and select the following options:

- From pool sata to pool system

- Include files that match all of these criteria:

- size is smaller than 100 MiB

- last accessed less than 7 days ago

- Exclude files that match any of these criteria:

- Extension IN .iso

- Extension IN .zip

- Migration order: Hot files first

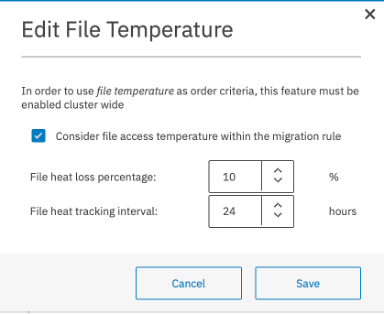

- You'll get a pop-up to enable the file temperature mechanism:

- Select the option button, and click save.

Move the rule to the top position and apply the changes. It is important to limit this rule to small and recently accessed files (accessed within the last 7 days); otherwise it will cause the system pool to fill up.
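To get a feel for how a heat counter that "decreases over time" behaves, here is a deliberately simplified model: the file loses a fixed percentage of its heat in every period in which it is not accessed. The function name `heat_after` and the numbers are made up for illustration, and the real calculation in Spectrum Scale (tuned with the `fileHeatPeriodMinutes` and `fileHeatLossPercent` settings) may differ in its details.

```shell
#!/bin/sh
# heat_after HEAT LOSS_PERCENT PERIODS: heat that remains after PERIODS idle
# periods, assuming LOSS_PERCENT of the heat is lost in every period.
# This is a simplified model, not the exact Spectrum Scale formula.
heat_after() {
    awk -v h="$1" -v loss="$2" -v n="$3" \
        'BEGIN { printf "%.4f\n", h * (1 - loss / 100) ^ n }'
}

# Example: a file with heat 1.0 that is left alone for 7 periods at 10% loss.
heat_after 1.0 10 7
```

The point of the model is that heat never drops to zero abruptly, which is why the rule above also needs the access-time and size limits to keep the system pool from filling up.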

You can now start the policies by pressing "Run Policy"; they will run in the background. It would be a good idea to schedule this policy run daily. The GUI does not provide an option for this, so you'll have to set that up from the command line. The command to run the policies is:

mmapplypolicy nas1

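You can put this command (with its full path name!) into a script in /etc/cron.daily. Remember to make the script executable (chmod +x) afterwards, as cron only runs executable files from that directory. Create it like so: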

# cat > /etc/cron.daily/gpfs-mmapplypolicy << EOF

/usr/lpp/mmfs/bin/mmapplypolicy nas1 -L 0

EOF

Let's test this out. We'll create a test ISO file of 101 MiB, so the placement rule puts it in the sata pool and the migration rule for compression will compress it.

# head -c 101M /dev/zero > /nas1/test2.iso

# du -sm /nas1/test2.iso

101 /nas1/test2.iso

# mmlsattr -L /nas1/test2.iso

...

storage pool name: sata

Misc attributes: ARCHIVE

...

# mmapplypolicy nas1 -L 6

...

<2> /nas1/test2.iso [2021-05-17@11:33:09 0 0 105906176 sata 2021-05-17@11:33:08 12288 root] RULE 'compress_stuff' MIGRATE FROM POOL 'sata' COMPRESS('yes') WEIGHT(inf)

...

2 1 12288 0 0 0 RULE 'compress_stuff' MIGRATE FROM POOL 'sata' COMPRESS('yes')

...

# mmlsattr -L /nas1/test2.iso

...

storage pool name: sata

...

Misc attributes: ARCHIVE COMPRESSION (library z)

...

# du -sm /nas1/test2.iso

12 /nas1/test2.iso

Note that the file stays in the sata pool, even though the "hot" rule could move it back to the system pool. We prevented this in two ways: we excluded the .iso filename extension, and we set a maximum file "Size" of 100 MiB. We could have set a maximum "Allocated Space" instead, and that would behave differently: after compression the file still has a size of 101 MiB, but it only occupies about 12 MiB on disk.
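The difference between file size and allocated space is easy to see on any Linux filesystem. The sketch below assumes GNU coreutils (`stat -c`, `truncate`) and uses a sparse scratch file as a stand-in for the compressed ISO; the helper name `show_sizes` is made up for this example.

```shell
#!/bin/sh
# show_sizes FILE: print the logical size and the allocated space in bytes.
show_sizes() {
    size=$(stat -c '%s' "$1")      # logical file size in bytes
    blocks=$(stat -c '%b' "$1")    # number of allocated blocks
    bsize=$(stat -c '%B' "$1")     # size in bytes of each block counted by %b
    echo "size=$size allocated=$((blocks * bsize))"
}

# A sparse 101 MiB file: large logical size, little or no space allocated.
demo=$(mktemp)
truncate -s 101M "$demo"
show_sizes "$demo"
```

A rule on "Size" looks at the first number, a rule on "Allocated Space" at the second, so the same file can pass one limit and fail the other.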

Plenty of opportunity to fine tune the placement of your files, and even more opportunities to make a mess. Be careful out there!

Now that we have the infrastructure done, in the next blog we'll save our files from bad actors by using snapshots: Part 5: Creating snapshots to safeguard against ransomware attacks
