The PowerVM Virtual Fibre Channel (VFC)/NPIV stack supports multiple queues on POWER9 systems with VIOS version 3.1.2 and AIX version 7.2 TL5, or later.

- Motivation

Over the last 5-6 years, SSDs and all-flash arrays that support more than a million IO operations per second (IOPS) with sub-millisecond latencies have come to market, and modern host bus adapters (HBAs) have also added high-IOPS capabilities. The host-side storage software stacks built before flash storage were good enough for long-latency non-flash storage, where IO response time is on the order of milliseconds. But fast storage devices started exposing bottlenecks in the software stack. Hence, a major restructuring of our Fibre Channel storage device driver stacks was required. The storage software stacks were changed to add multiple queues so that applications can push more IOs in parallel. This also gave us an opportunity to fix inefficiencies in the code, such as lock contention and poor affinity.

This paper explains the work done in the PowerVM NPIV virtual stack to improve IO throughput by adding multiple queues.

- NPIV Overview

N_Port ID Virtualization (NPIV) is a Fibre Channel industry-standard technology that allows multiple unique worldwide port names (WWPNs) to be assigned to a single physical Fibre Channel HBA port. The worldwide port names can then be assigned to multiple initiators, such as operating systems. Thus, NPIV allows a physical N_Port to be logically partitioned into multiple logical ports/FC addresses so that a physical HBA port can support multiple initiators, each with a unique N_Port ID.

In PowerVM, NPIV enables the Virtual I/O Server (VIOS) to provision an entire logical port to a client LPAR rather than individual LUNs. A client partition with this type of logical port operates as though it has its own dedicated FCP adapter(s). PowerVM NPIV supports AIX, Linux, IBM i and open firmware (boot) clients. A single physical adapter port can be mapped to one or more (up to 255) client virtual adapters. The VIOS acts as a pass-through and does not see any disks, which makes it easy for the administrator to manage the VIOS. Client partitions see the disks/LUNs coming from the different storage systems with all their attributes.

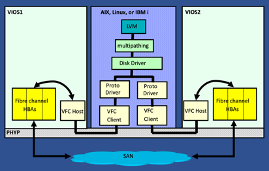

Prior to VIOS 3.1.2 and AIX 7.2 TL5, the NPIV stack supported a single queue between the client (AIX/Linux/IBM i) and the VIOS because the physical Fibre Channel driver only supported a single physical queue. The first figure shows the typical dual VIOS configuration: a client partition with a typical storage IO stack in the middle, and a VIOS on each side. Messages between the client partition and the VIOS are passed through the Power Hypervisor (PHYP) command/response queue. So there was a single queue between the client partition and the VIOS and, on the VIOS, a single queue between the virtual driver and the physical adapter.

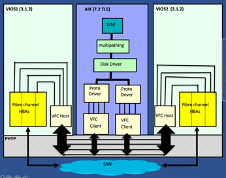

The following figure shows the newly added support for end-to-end multiple queues. The AIX FC driver enabled multiple work queues in the AIX protocol and adapter drivers. The client and VIOS partitions use multiple subordinate queues, one per IO queue.

- Requirements for Multiple queue support:

The following table lists the requirements for end-to-end multiple queue support in the PowerVM NPIV stack for an AIX client. If all hardware, firmware version, VIOS version and AIX client version requirements are satisfied, multiple queues are turned on by default. The number of queues is decided based on the adapter attributes and the resources available.

| Component | Supported versions |
|---|---|
| Hardware | POWER9™ processor-based systems or later |
| Power Firmware | Version 940 or later. Note: 950 or later is recommended |
| Fibre Channel (FC) adapter | Fibre Channel 16Gb or 32Gb supported adapter that supports Multiple-Queue feature. |
| VIOS | Version 3.1.2, or later |
| AIX | Version 7.2 Technology Level 05, or later. |
| Linux® Enterprise Server (SUSE, Red Hat®) | Multiple queue support will be added in future releases |
| IBM i client | IBM i 7.4 Technology Refresh 6 and IBM i 7.3 Technology Refresh 12 |

- Immediate gains from new multi-queue stack

- Increase in IOPS: The client partition is now capable of pushing many more IOs in parallel.

- Fewer virtual adapters needed: Traditionally, customers added multiple virtual adapters to push more IOs in parallel. With multiple queues, a single initiator port can push far more IOs, so you may not need multiple virtual adapters.

- IO processing in process context: IO processing is moved from interrupt context to process context, which gives better control over how many IOs are processed before the next interrupt is requested. This reduces interrupt overhead and improves CPU utilization. It also reduces the time a CPU is held by an interrupt that has to process multiple IOs. The threads can migrate freely to different CPUs, which improves overall system performance.

- Important Implementation details


- The NPIV storage stack is enhanced to process IOs at the process context instead of interrupt context. There is one thread per IO queue.

- Power Hypervisor now supports subordinate queues (SUB-Q) for the main command response queue. Each SUB-Q has its own command and response queue and there is an interrupt associated with it. We added a SUB-Q per IO queue to send and receive IO commands between client and VIOS partition.

- All drivers (AIX client protocol, Virtual Fibre Channel (VFC), VIOS vfc_host and physical FC) in the NPIV stack are enhanced to use multiple queues.

- Several changes were made with performance in mind, such as minimal locking in the IO path, no locking while tracing, a lock per IO queue instead of per target port, a per-queue free buffer pool to reduce global queue locking, and pinning the thread stack.

- Frequently Asked Questions (FAQ)

- Is any user setup or tuning required for multiple queues?

No. If all the requirements are met, multiple queues are enabled by default, and in most cases no tuning changes are needed for optimal operation. Refer to the "Multi-queue related configuration Attributes" section for additional details.

- How are the end to end IO queues selected?

The number of IO queues that the NPIV client uses depends on several factors, such as the FC adapter, the FW level, the VIOS level, and the tunable attributes of the VFC host adapter. During the initial configuration, the client (VFC) negotiates the number of queues with the VIOS (VFC host adapter) and configures the smaller of two values: the num_io_queues attribute at the FC physical adapter and the number of queues reported by the VFC host.

After the initial configuration, the negotiated number is the maximum number of queues that the VFC client can enable. If the VFC host renegotiates with more queues after operations such as remap, VIOS restart, and so on, the number of queues remains the same as the initially negotiated number. However, if the VFC host renegotiates with fewer queues, the VFC client reduces its configured queues to this new lower number.

For example, if the initial negotiated number of queues between the VFC client and VFC host is 8, and the VFC host later renegotiates the number of queues as 16, the VFC client continues to run with 8 queues. If the VFC host renegotiates the number of queues as 4, the VFC client adjusts its number of configured queues to 4. However, if the VFC host then renegotiates the number of queues as 8, which would increase the number of configured queues back to 8, the VFC client does not grow automatically: it must be reconfigured to renegotiate the number of queues from the client side.
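The negotiation rules above can be sketched as a small shell model. This is only an illustration of the described behavior, not driver code: the function names `negotiate_initial` and `renegotiate` are hypothetical, and the starting values (8 on both sides) are assumed for the sake of the example.

```shell
#!/bin/sh
# Illustrative model of the queue negotiation described above.
# Function names are hypothetical; this is not driver code.

# min A B: print the smaller of two integers.
min() {
    if [ "$1" -le "$2" ]; then echo "$1"; else echo "$2"; fi
}

# negotiate_initial NUM_IO_QUEUES HOST_REPORTED
# Initial configuration: the smaller of the num_io_queues attribute
# and the number of queues reported by the VFC host.
negotiate_initial() {
    min "$1" "$2"
}

# renegotiate CURRENT HOST_OFFER
# After remap, VIOS restart, and so on: the client never grows beyond
# its current configured count, but shrinks if the host offers fewer.
renegotiate() {
    min "$1" "$2"
}

# Walk through the example from the text (assumed start: 8 on both sides).
q=$(negotiate_initial 8 8)   # initial negotiation: 8
q=$(renegotiate "$q" 16)     # host offers 16: stays at 8
q=$(renegotiate "$q" 4)      # host offers 4: drops to 4
q=$(renegotiate "$q" 8)      # host offers 8: stays at 4 until reconfigured
echo "configured queues: $q"
```

In this model, reconfiguring the VFC client corresponds to calling `negotiate_initial` again, which is the only way the count can increase.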


- How do we know that multiple queues are enabled and working?

The /proc file system on the VIOS reports the number of queues actively used between the client and the VIOS. In the following example, “Number of SUBQ channels” shows the number of active end-to-end IO queues. Replace vfchost0 with your vfchost name.

# cat /proc/sys/adapter/vfc/vfchost0/stats | head -3

Number of CMD queues = 1

Number of SUBQ channels = 8

NOTE: You need to be root to access the statistics from /proc filesystem.
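The check above can be wrapped in a small helper. The following is a minimal sketch that parses the stats text shown in the example; the function name `subq_channels` is hypothetical, and treating a value greater than 1 as "multiple queues active" is an assumption, since the source does not show the output in single-queue mode.

```shell
#!/bin/sh
# subq_channels: read vfchost stats text on stdin and print the value of
# the "Number of SUBQ channels" line (prints nothing if the line is absent).
subq_channels() {
    awk -F'=' '/Number of SUBQ channels/ {
        gsub(/[ \t]/, "", $2)   # strip blanks around the number
        print $2
        exit
    }'
}

# Sample input copied from the example output above.
sample='Number of CMD queues = 1
Number of SUBQ channels = 8'

n=$(printf '%s\n' "$sample" | subq_channels)
if [ "${n:-0}" -gt 1 ]; then
    echo "multiple queues active: $n"
else
    echo "single queue (or no SUBQ line found)"
fi

# On a VIOS, as root, the same function could read the live file:
#   subq_channels < /proc/sys/adapter/vfc/vfchost0/stats
```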

- How do I see the statistics of each queue?

On the VIOS partition, you can check the statistics stored in the /proc filesystem. The following example shows the statistics for the Virtual Fibre Channel adapter vfchost0. Replace vfchost0 with your vfchost name.

# cat /proc/sys/adapter/vfc/vfchost0/stats | grep "Commands received"

Commands received = 0

Commands received = 8692844

Commands received = 10236718

Commands received = 11848144

Commands received = 13025270

Commands received = 11541206

Commands received = 10606106

Commands received = 6627340

Commands received = 8881815

NOTE: You need to be root to access the statistics from proc filesystem.
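To judge how evenly the commands are spread, the counters can be summarized with awk. The sketch below assumes that each "Commands received" line corresponds to one queue, in the order the file lists them (the source does not state which line belongs to which queue); the function name `queue_share` is hypothetical.

```shell
#!/bin/sh
# queue_share: read vfchost stats text on stdin and print, for every
# "Commands received" line, its position, its count, and its share of the total.
queue_share() {
    awk -F'=' '/Commands received/ {
        n++
        count[n] = $2 + 0      # numeric value after the "=" sign
        total += count[n]
    }
    END {
        for (i = 1; i <= n; i++) {
            pct = (total > 0) ? 100 * count[i] / total : 0
            printf "line %d: %d commands (%.1f%%)\n", i, count[i], pct
        }
        printf "total: %d\n", total
    }'
}

# Usage with the first three lines of the sample output above.
queue_share <<'EOF'
Commands received = 0
Commands received = 8692844
Commands received = 10236718
EOF

# On a VIOS, as root:
#   queue_share < /proc/sys/adapter/vfc/vfchost0/stats
```

A roughly equal share per line suggests the load is balanced across queues; a heavily skewed distribution may be worth investigating.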

- How do I check IO statistics on the AIX client?

- You can use fcstat to find the statistics at the virtual FC adapter level.

# fcstat fcs0

FIBRE CHANNEL STATISTICS REPORT: fcs0

Seconds Since Last Reset: 1125567

FC SCSI Adapter Driver Information

No DMA Resource Count: 0

No Adapter Elements Count: 0

No Command Resource Count: 0

FC SCSI Traffic Statistics

Input Requests: 175045

Output Requests: 166363

Control Requests: 1103

Input Bytes: 654498534

Output Bytes: 657114112

- You can also use the iostat command to check statistics at the device level.

- Do we need more CPU resources on VIOS?

Performance testing showed that CPU utilization per IO improved because the overall IO stack is much more efficient. However, the new NPIV stack can push many more IO commands, so total CPU utilization may go up depending on the number of IOs pushed by the client partition.

NOTE: After upgrading from the single-queue stack to the multi-queue stack, the client will start sending more IOs. Monitor the CPU and memory resources on the VIOS and add more resources if required.

- Do we need more memory resources on VIOS?

Multiple queue support adds a small memory requirement on the VIOS. New data structures are added per queue and per thread. To maintain IO statistics, the size of the command structure is increased. Extra commands are added per queue so that some IOs can be processed without grabbing any locks. The IO Direct Memory Access (DMA) size is increased to support more parallel IOs.

- Why are some IO threads showing higher CPU utilization compared to prior VIOS releases?

We changed the model for how IOs are processed in the IO stack. More processing is done in process context and less in interrupt context; as a result, you may see higher CPU utilization on some kernel IO processes. CPU utilization in interrupt context is generally counted under overall system utilization and not under any specific thread. Also, the newer stack removed inefficiencies such as locking, so the client partition may be able to push more IOs, and hence more CPU resources could be utilized.

- Why is there no performance gain for the throughput less than 150K IOPs?

If the application is pushing a low number of IOs, each queue will hold fewer IOs. Performance testing showed that thread processing does not show any gain if the number of IOs in a queue is less than 6. In such cases it is better to reduce the number of end-to-end queues.

Note: We will try to improve on this in future releases.
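As a rough back-of-the-envelope aid, the guidance above can be turned into simple arithmetic. The sketch below assumes that in-flight IOs spread evenly across queues, which real workloads may not do; the function name `suggest_queues` is hypothetical, and only the threshold of 6 comes from the text.

```shell
#!/bin/sh
# suggest_queues OUTSTANDING_IOS QUEUES [MIN_PER_QUEUE]
# Print the largest queue count (between 1 and QUEUES) that keeps the
# average number of in-flight IOs per queue at or above MIN_PER_QUEUE.
# MIN_PER_QUEUE defaults to 6, the threshold mentioned in the text.
suggest_queues() {
    ios=$1
    queues=$2
    min_per_queue=${3:-6}
    fit=$((ios / min_per_queue))        # integer division
    if [ "$fit" -lt 1 ]; then
        fit=1                           # never go below one queue
    fi
    if [ "$fit" -gt "$queues" ]; then
        fit=$queues                     # never exceed the current count
    fi
    echo "$fit"
}

suggest_queues 24 8    # 24 IOs over 8 queues is 3 per queue; prints 4
suggest_queues 96 8    # 12 per queue already; prints 8
```

The result is only a starting point for experimentation; measured throughput and latency should drive the final setting.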

- How do I disable end-to-end queues for a specific virtual adapter?

- To disable queues between the client and the VIOS, set the vfchost adapter attribute limit_intr to true. You need to reboot the VIOS.

- To reduce the number of physical queues, set the FC physical adapter attribute num_io_queues to a smaller value. You need to reboot the VIOS.

- If I set all multiple queue related attributes to their maximum, will that improve performance?

Testing showed that increasing the number of queues to 16 did not improve performance. But if a single physical adapter port is shared across multiple client partitions, you should raise num_io_queues at the physical adapter level to the maximum. The defaults were chosen based on performance runs.

- Multi-queue related configuration Attributes

The default attribute values were chosen based on performance data collected in the lab. We expect that most customers will not need to change these attributes, but they are described here in case you want to change them.

The VIOS VFC host driver supports two levels of tuning.

- VIOS partition level tuning using the pseudo device viosnpiv0. It applies to all the adapters on the VIOS. If the num_per_range attribute at the VFC host adapter level does not exist or is set to 0, the value from viosnpiv0 is inherited. This makes it easy to tune all adapters by setting a single attribute.

- VFC host driver level tuning: If a specific adapter needs special tuning, the adapter level attributes can be tuned. The vfchost attribute value overrides the global value set at viosnpiv0.

Note: Refer to the documentation to determine whether changing these attributes requires a reboot.
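The two tuning levels can be sketched as a small shell model of how the VFC host side arrives at its queue setting. This is only an illustration of the rules described in this document (inheritance from viosnpiv0, adapter-level override, and limit_intr forcing a single queue); the function name `effective_queues` is hypothetical, and the result is the host-side setting, not the final count negotiated with the client.

```shell
#!/bin/sh
# effective_queues VFCHOST_NUM_PER_RANGE GLOBAL_NUM_PER_RANGE LIMIT_INTR
# Models how the attributes combine for one vfchost adapter:
#   - limit_intr=true forces a single queue
#   - a vfchost num_per_range that is empty (attribute absent) or 0
#     inherits the viosnpiv0 value
#   - otherwise the vfchost value overrides the global one
effective_queues() {
    host_val=$1
    global_val=$2
    limit_intr=$3
    if [ "$limit_intr" = "true" ]; then
        echo 1
    elif [ -z "$host_val" ] || [ "$host_val" -eq 0 ]; then
        echo "$global_val"
    else
        echo "$host_val"
    fi
}

effective_queues 0 8 false    # inherits the global value; prints 8
effective_queues 16 8 false   # adapter-level override; prints 16
effective_queues 16 8 true    # limit_intr wins; prints 1
```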

The attributes affecting multiple queues are mentioned below.

| Attribute | Min value | Max value | Default value | Description/Guidance |
|---|---|---|---|---|
| **Fibre Channel adapter attributes** | | | | |
| num_io_queues | 1 | 16 | 8 | Determines the number of I/O queues used in SCSI I/O communication. Increase this value to the maximum if multiple virtual adapters are using this physical adapter port. |
| **viosnpiv0: partition pseudo device** | | | | |
| num_per_range | 4 | 64 | 8 | Determines the number of SCSI I/O queues for any adapter whose adapter-level attribute is set to 0. We think the default is appropriate, but if the physical ports still have spare bandwidth and the client partition can push more IOs, you can increase the number of queues. You may also need to increase the client virtual adapter attribute and the physical adapter attribute to a similar value. |
| num_local_cmds | 1 | 64 | 5 | Number of free commands per thread that the thread can access without a lock. You can increase this value if the statistics show that the number of commands allocated from the global queue is very high. NOTE: The VIOS consumes more memory if the command count is increased. |
| bufs_per_cmd | 1 | 64 | 10 | Number of buffer structures allocated per command. This value is useful for large IOs with a scatter-gather list. It can be tuned by checking the statistics related to global buffer allocation. NOTE: The VIOS consumes more memory if the buffer count is increased. |
| **Virtual Fibre Channel host adapter (vfchostx)** | | | | |
| num_per_range | 0 | 64 | 0 | Determines the number of SCSI I/O queues for the VFC adapter. 0 means the value is taken from the global viosnpiv0 attribute. Refer to the explanation of num_per_range under the global attributes. Change the value at the vfchost level if you want it to affect only a specific adapter. |
| limit_intr | True/False | True/False | False | Overrides the number of queues set by other attributes and allows only a single queue. This helps to reduce the number of interrupt sources. You can use this attribute to force the use of a single channel for an adapter. |

- Run the ioscli command “lsdev -dev fcs<n> -attr” to display the physical adapter attributes.

- Run the ioscli command “lsdev -dev viosnpiv0 -attr” to display the VIOS partition level attributes of the pseudo device viosnpiv0.

- Run the ioscli command “lsdev -dev vfchost<n> -attr” to display the VIOS VFC host attributes.

- AIX client side attributes

The VFC client tunables that the user can modify are listed in the table below with their minimum, maximum and default values. Run “lsattr -El fcs<n>” to display the virtual adapter attributes on an AIX client.

| Attribute | Min value | Max value | Default value | Description |
|---|---|---|---|---|
| num_cmd_elems | 512 | 2048 | 1024 | This attribute determines maximum number of active I/O operations at any given point of time. |
| num_io_queues | 1 | 16 | 8 | This attribute determines the number of I/O queues used in SCSI I/O Communication |
| num_sp_cmd_elem | 512 | 2048 | 512 | This attribute determines maximum number of special command operations at any given point of time. |

- Live Partition Mobility (LPM) support

LPM is one of the most important features in PowerVM. It provides the ability to migrate a client logical partition from one system to another.

End-to-end NPIV multi-queue requires support from the FC adapter, the SCSI protocol driver, the VFC client driver, the VFC host driver and PHYP. Whether NPIV multi-queue is enabled at the destination during LPM depends on all of these components. If any one of them is at an older level, multi-queue support will not be enabled after the LPM. The AIX client allows moving from a source environment that supports multi-queue to a destination that supports only a single queue. It also supports multi-queue when the client is moved from a single-queue environment to a multi-queue environment.

The number of queues configured after LPM under different circumstances is shown in the table below.

| No. | Client partition | Source VIOS | Configured queues (source) | Source FW version | Destination VIOS | Supported queues (destination) | Destination FW version | Configured queues after LPM |
|---|---|---|---|---|---|---|---|---|
| 1 | Multi-Q | Multi-Q | 8 | >= 940 | Multi-Q | 8 | >= 940 | 8 |
| 2 | Multi-Q | Multi-Q | 8 | >= 940 | Multi-Q | 16 | >= 940 | 8 |
| 3 | Multi-Q | Multi-Q | 16 | >= 940 | Multi-Q | 8 | >= 940 | 8 |
| 4 | Multi-Q | Multi-Q | 1 | >= 940 | Multi-Q | 1 | >= 940 | 1 |
| 5 | Multi-Q | Multi-Q | 8 | >= 940 | Single-Q | 1 | >= 940 | 1 |
| 6 | Multi-Q | Multi-Q | 1 | >= 940 | Single-Q | 1 | >= 940 | 1 |
| 7 | Multi-Q | Multi-Q | 8 | >= 940 | Multi-Q | 8/16 | < 940, P8, P7 | 1 |
| 8 | Multi-Q | Multi-Q | 1 | < 930, P8, P7 | Multi-Q | 8/16 | >= 940 | 1 |
| 9 | Multi-Q | Multi-Q | 1 | =930 | Multi-Q | 8/16 | >= 940 | 8/16 |
| 10 | Multi-Q | Single-Q | 1 | >= 940 | Multi-Q | 8/16 | >= 940 | 8/16 |
| 11 | Single-Q | Multi-Q | 1 | >= 940 | Multi-Q | 8/16 | >= 940 | 1 |
| 12 | Single-Q | Single-Q | 1 | >= 940 | Multi-Q | 8/16 | >= 940 | 1 |

LPM Scenarios with NPIV-Multi-Queue for AIX client

- LPAR with 8 NPIV queues migrating to a destination VIOS with 8/16 queues

Both sides are multi-queue aware. The LPAR continues to run with 8 queues after the migration, even if the destination VIOS supports 16 queues.

- LPAR with 16 queues migrating to a destination VIOS that supports 8 queues

If the destination supports fewer queues than the source, the LPAR settles on the smaller of the queue counts supported by the VFC client and the VFC host after the migration. In this case, the LPAR is configured with 8 queues after the migration.

- LPAR with 8 queues migrating to a single-queue destination VIOS

Since the VFC host at the destination is not multi-queue enabled, the LPAR runs in single-queue mode after the LPM. This migration may affect IO performance, depending on the type of IO traffic. Once the LPAR has been converted from multi-queue to single-queue by migrating from a multi-queue aware VIOS (version 3.1.2 or later) to a single-queue VIOS (a version earlier than 3.1.2), multi-queue cannot be re-enabled without reconfiguring the adapter. Hence, if the same LPAR is migrated back to P9 compatible mode with a multi-queue aware VIOS, it continues to run in single-queue mode. The user must explicitly remove and reconfigure the VFC client adapter or reboot the LPAR to re-enable NPIV multi-queue.

- Single-queue aware LPAR migrating to a multi-queue aware destination VIOS

When an LPAR is migrated from a single-queue (legacy) configuration to a multi-queue aware setup, it continues to run in legacy (single-queue) mode. To enable NPIV multi-queue after upgrading the LPAR to hardware and software versions that support it, the user must reconfigure the VFC client adapter or restart the LPAR.

- LPAR with 8 NPIV queues migrating to Power 7, 8 or 9 with FW level less than 930

When an LPAR is migrated from a multi-queue aware setup to a destination with a FW level less than 930 (or early versions of Power9, Power 8, Power 7), the LPAR runs in single-queue mode after the LPM. This migration may affect IO performance, depending on the type of IO traffic.

Once the LPAR has been converted from multi-queue to single-queue by migrating from a multi-queue aware VIOS (version 3.1.2 or later) to a single-queue VIOS (a version earlier than 3.1.2), multi-queue cannot be re-enabled without reconfiguring the adapter. Hence, if the same LPAR is migrated back to P9 compatible mode with a multi-queue aware VIOS, it continues to run in single-queue mode. The user must explicitly remove and reconfigure the VFC client adapter or reboot the LPAR to re-enable NPIV multi-queue.

- Multi-Q LPAR migrating from a multi-queue unaware setup to a multi-queue aware destination setup

When a multi-queue capable LPAR (AIX 7.2 TL5 or later) is migrated from a single-queue (legacy) setup to a multi-queue setup, and this is the first migration from a P8 to a P9 environment, multi-queue is enabled during the LPM.

- Performance results

The following performance measurements were taken using the FIO tool running RAW IO. Performance varies by the application and configuration.

- FIO (Flexible I/O) test tool: FIO is a synthetic workload generator that simulates different IO patterns. It is an industry standard tool. It provides control to change queue depth, number of concurrent jobs, length and spatial locality (random/sequential/etc.) of the IOs.

- Hardware:

- Server: IBM Power System E980 (9080-M9S)

- HBA: 2 x 2-port 16Gb FC adapter cards (a total of 4 ports connected to the SAN)

- Storage: IBM FlashSystem 900 with 12 modules

- LUNs: 32 x 100GB (80% capacity)

- FC Switch: IBM 8960-N64 Model F64

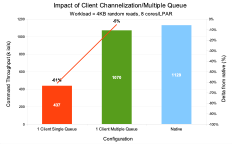

- Comparison with Native Stack: As shown in the next figure, throughput is compared between native (physical) mode, the NPIV stack with a single queue and the NPIV stack with multiple queues. The throughput gap between the native stack and the NPIV stack is reduced by 56%. The figure also shows a major throughput boost with multiple queues over a single queue.

- Response time improvements: This chart compares the variation in the response time when throughput is increased. The red line shows the performance with single queue and green line shows multiple queue. The gap between the lines shows the significant reduction in IO response time and ability to scale up throughput with less impact on the response time that multiple queue can provide.

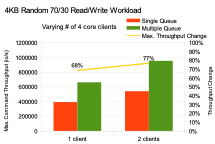

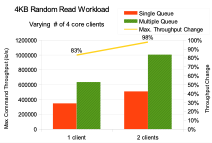

- IOPS improvement: The following chart shows how throughput varies with an increase in the number of clients. This is a simulation of throughput scaling for an OLTP type workload. The orange solid bars show the throughput with a single queue and the green hashed bars show the throughput with end-to-end multiple queues. The throughput improvement is around 68% with a single client and around 77% with 2 clients.

- Write Maximum Throughput: This chart shows the throughput scaling for 100% 4KB random writes. With a few clients the improvement is around 34%. If more clients are added that request IOs at similar rates, the gain in throughput due to multiple queues diminishes as the throughput limit of the backend storage server is approached.

- Read Maximum Throughput: This chart shows the throughput scaling for 100% 4KB random reads. The improvement is around 83% with a single client and around 98% with 2 clients.

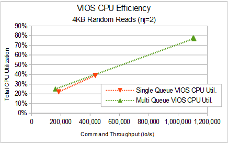

- VIOS CPU efficiency: At lower queue depths, where less CPU is consumed, multiple queue does consume more CPU per IO than single queue. But as queue depth, throughput and thus CPU usage increase, multiple queue CPU usage per IO drops relative to single queue. This CPU efficiency gain allows the multiple queue design to support appreciably higher throughputs than single queue, while using appreciably less CPU per IO at those higher throughputs. The CPU utilization curve for multiple queue is flatter than single queue, but it’s hard to fully see because single queue cannot provide the higher IOPs that multiple queue can.

These observations hold true if no other resource limits throughput. For example, if many client partitions running concurrently demand more throughput than the storage server can supply, multiple queue will not provide for any more total throughput.

- Summary

The new end to end multi-queue NPIV stack showed around 1.8x improvement in IO throughput for some workloads. It reduced the throughput gap between native mode and NPIV mode.

As throughput increases, CPU efficiency per IO is better with multiple queues than with a single queue.

PowerVM is now capable of pushing a lot more IOs in the NPIV stack.

- References

- Infocenter document regarding multiple queue support https://www.ibm.com/docs/en/power9?topic=channel-npiv-multiple-queue-support

