Streams Console has useful features that help you track down errors and identify performance problems such as bottlenecks and memory leaks. This post will cover some of these features:

Finding errors

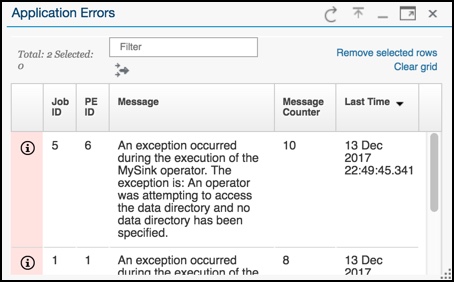

You can use the console’s new Application Errors card in the Application Dashboard to quickly see application errors. The Application Errors card shows a synopsis and a sortable list of the error messages.

Application Errors card

Select the application monitoring alerts that are important to you

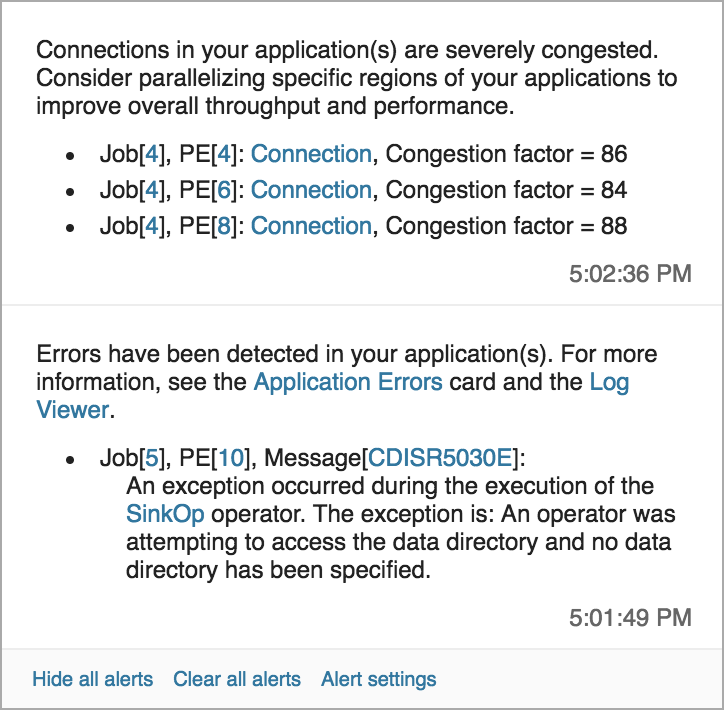

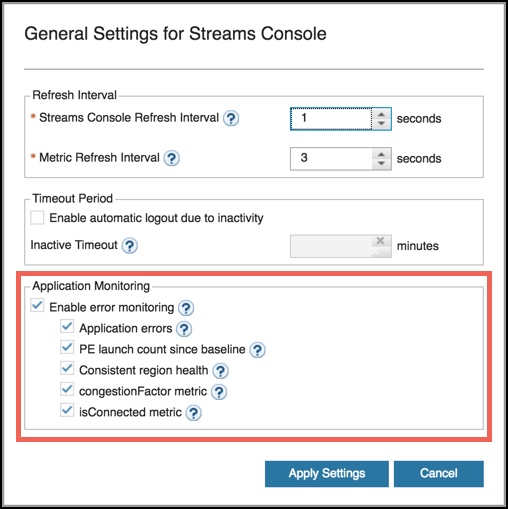

The console displays alerts that link to the corresponding job, the Log Viewer, and the new Application Errors card. You can specify which kinds of error conditions trigger the alerts in the General Settings for Streams Console, which you open from the clock icon in the upper-right corner of the screen. You can also open the settings from any pop-up alert.

Example alert

General Settings with Application Monitoring options

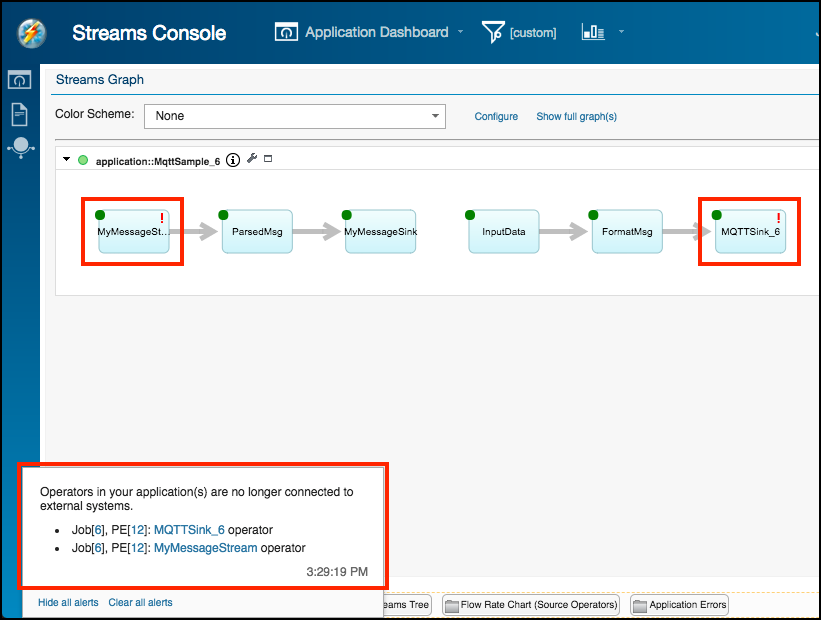

Quickly locate which operators are causing job failures and why

In the Streams graph, a red exclamation point on the operator icon warns you that the operator is causing a job failure. For a Source or a Sink operator, the failure can be due to the loss of an external connection.

Hover your mouse over the operator to view the error message.

Streams graph with alerts for operators

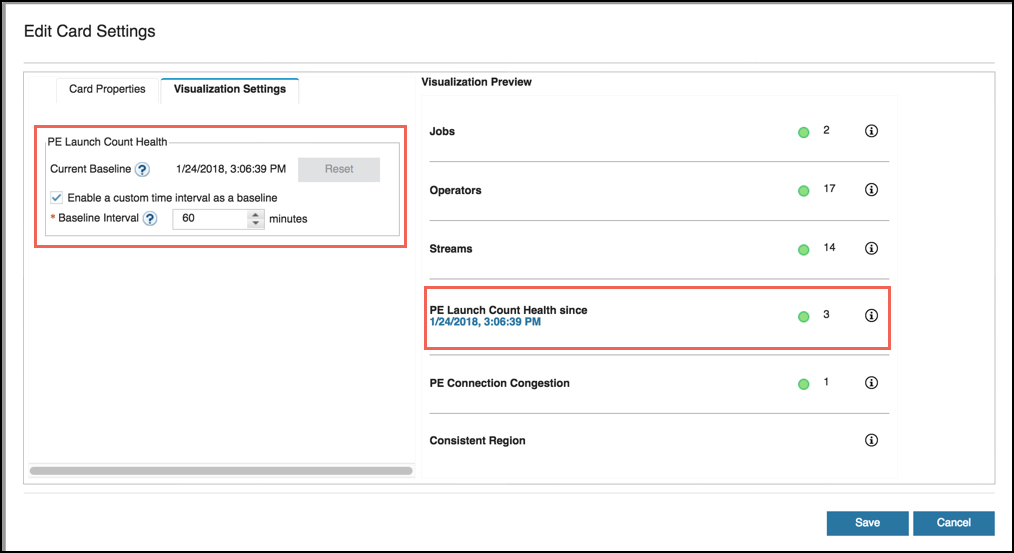

Specify a baseline from which to view PE launch counts

PE launch counts are the number of times that a PE has been restarted due to application failures. Viewing the PE launch counts helps you understand the health of your application. Previously, you could view PE launch counts only since job submission time. Now you can set a baseline and an interval.

To set the baseline and interval, go to the Application Dashboard and select the Summary card. Click Settings (gear icon), and then click the Visualization Settings tab.

Summary card settings for PE launch count baseline and interval

Watch the video

This video summarizes some of these new features. Click the link to watch the video in Watson Media.

High CPU utilization and bottlenecks

To find out which part of your application is processing data slowly and thus contributing to a bottleneck, Streams Console provides the congestionFactor metric.

A related metric is the relativeOperatorCost. This metric is used when multiple operators are fused into the same processing element, or PE.

Relative Operator Cost

When two or more operators are fused into the same process, the relativeOperatorCost metric helps you determine which operator in a processing element (PE) is using the most resources. The relativeOperatorCost metric is an integer in the range 0 to 100. An operator with a relative cost of 0 has negligible computational cost compared to the other operators in the same PE. A relative cost of 100 indicates that almost all observed execution time of the PE was spent in that operator.

The cost metric is obtained by scaling the operator sampling counter to the range 0 to 100. For example, suppose opA and opB are the only operators in a PE, and their sampling counters are 100 and 900, respectively. The cost metric of opA is (100/(100+900))*100 = 10, and the cost metric of opB is 90.
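The scaling described above can be sketched in a few lines of Python. This is a minimal illustration, not Streams code: the function names are hypothetical, and rounding to the nearest integer is an assumption (the Streams runtime may round or truncate differently).

```python
from typing import Dict


def relative_operator_costs(sampling_counters: Dict[str, int]) -> Dict[str, int]:
    """Scale each operator's sampling counter to 0-100 relative to its PE's total."""
    total = sum(sampling_counters.values())
    if total == 0:
        # No samples collected yet: report every operator as negligible.
        return {op: 0 for op in sampling_counters}
    # Assumption: round to the nearest integer. Multiplying before dividing
    # keeps the worked example (100 and 900) exact.
    return {op: round(count * 100 / total) for op, count in sampling_counters.items()}


def most_expensive_operator(sampling_counters: Dict[str, int]) -> str:
    """Return the operator with the highest relative cost in the PE."""
    costs = relative_operator_costs(sampling_counters)
    return max(costs, key=costs.get)


costs = relative_operator_costs({"opA": 100, "opB": 900})
print(costs)  # {'opA': 10, 'opB': 90}
print(most_expensive_operator({"opA": 100, "opB": 900}))  # opB
```

Because the values are relative to the PE, a cost of 90 says only that opB dominates its own PE; it does not compare opB with operators in other PEs.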

You can see the relativeOperatorCost metric when hovering over an operator in the Streams Graph. In addition, you can apply coloring schemes to easily identify or highlight operators with the highest relative computational cost.


This video shows how to use these two metrics to identify bottlenecks and resource hogs.

What is a parallel region? A parallel region is defined by adding the @parallel annotation to an SPL operator or by calling the Stream.parallel() function in the Python API.

Operators within a parallel region are replicated, so multiple copies of the same operator run at the same time to improve performance.

See the documentation for more on parallel regions.
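Conceptually, each copy of a replicated operator (a channel) receives a share of the incoming tuples. The sketch below is a toy simulation in plain Python, not the Streams runtime or the streamsx API. It assumes round-robin routing, which is one of several routing strategies Streams supports for parallel regions; the function name is hypothetical, and `width` plays the role of the number of copies.

```python
from itertools import cycle
from typing import Dict, Iterable, List


def distribute_round_robin(tuples: Iterable, width: int) -> Dict[int, List]:
    """Toy model of a parallel region: hand each tuple to the next operator copy in turn."""
    if width < 1:
        raise ValueError("width must be at least 1")
    channels: Dict[int, List] = {channel: [] for channel in range(width)}
    # cycle() yields 0, 1, ..., width-1, 0, 1, ... forever; zip() stops
    # when the input tuples run out.
    for channel, tup in zip(cycle(range(width)), tuples):
        channels[channel].append(tup)
    return channels


print(distribute_round_robin(range(7), width=3))
# {0: [0, 3, 6], 1: [1, 4], 2: [2, 5]}
```

Each copy sees roughly 1/width of the traffic, which is why a slow operator can stop being a bottleneck once it is placed in a parallel region.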

See how to do it

Watch this video for a demonstration of how to use these metrics. Click the link to open the video in Watson Media.

Finding Memory Leaks

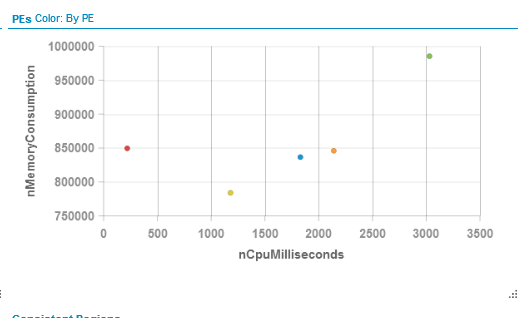

Streams Console has features that make it easier to detect memory leaks in your operators. In this example, I introduced a memory leak into the MatchRegex operator: it allocates a structure for every tuple but never frees it. This is a pathological case, chosen to illustrate how to use the console to detect memory leaks.

Below is the PE scatter chart shortly after the job was launched. The green dot in the upper-right corner is the PE that is using the most memory and the most CPU. This is the PE that contains the MatchRegex operator (hovering over a PE shows the names of the operators within it). At first glance this is expected, because that operator does most of the analytic processing and should use the most memory as it keeps track of potential match sets. The PE is using less than 1 MB (1,000,000 bytes).

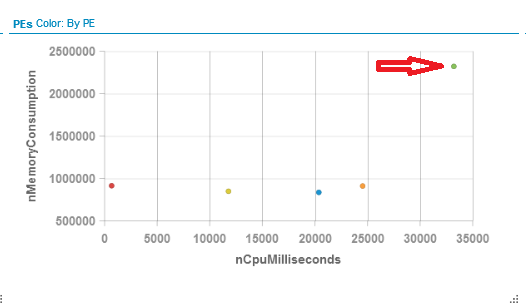

Here is the same graph approximately 30 seconds later. As you can see, memory usage is trending upward rapidly and has reached almost 2.5 MB.

If a Streams operator’s memory usage increases steadily as tuples arrive, there is likely a memory leak. Slower leaks are harder to detect in the graph over short periods, but they become noticeable when applications run overnight or for a couple of days. When we create Streams operators, one of our tests is to run the operator overnight with a steady flow of tuples to determine whether its memory usage keeps increasing.
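The "constantly increasing" rule of thumb can be expressed as a small check over memory samples collected at each metric refresh. This is a hypothetical helper, not part of Streams Console; the thresholds `min_samples` and `min_rising_fraction` are arbitrary assumptions, and the sample values below are made up for illustration.

```python
from typing import Sequence


def memory_slope(samples: Sequence[float]) -> float:
    """Least-squares slope of memory usage per refresh cycle (bytes per sample)."""
    n = len(samples)
    mean_x = (n - 1) / 2
    mean_y = sum(samples) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(samples))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


def looks_like_leak(samples: Sequence[float], min_samples: int = 5,
                    min_rising_fraction: float = 0.8) -> bool:
    """Flag a possible leak when memory rises on most refreshes and trends upward overall."""
    if len(samples) < max(min_samples, 2):
        return False  # too few refresh cycles to judge
    steps = list(zip(samples, samples[1:]))
    rising = sum(1 for before, after in steps if after > before)
    return rising / len(steps) >= min_rising_fraction and memory_slope(samples) > 0


# Made-up samples in bytes, one per metric refresh.
print(looks_like_leak([900_000, 1_300_000, 1_700_000, 2_100_000, 2_450_000]))  # True
print(looks_like_leak([1_000_000, 1_010_000, 990_000, 1_005_000, 995_000, 1_000_000]))  # False
```

Requiring most steps to rise, rather than every step, tolerates occasional dips from normal allocation churn; a slow leak may still need many more samples (for example, an overnight run) before it crosses the thresholds.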

Other ways to monitor memory usage for leaks:

a) In the scatter graph, hover over the element and watch the memory metric. While the hover is open, the value updates with every metric refresh cycle.

b) Switch the graph to the grid view and sort by memory. Then watch the values to determine whether memory usage is constantly rising.

The basic testing technique is to use a Beacon operator to flood an operator with tuples and check for memory leaks in the operator’s process method. Because the Beacon can generate very large volumes of tuples in a short time, it is an excellent way to detect even small memory leaks.
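The same flood-and-measure idea can be tried outside Streams as a minimal Python analogy: a loop stands in for the Beacon, and the standard library's `tracemalloc` module stands in for the console's memory metric. `LeakyMatcher`, `CleanMatcher`, and `flood` are hypothetical names; this is not Streams operator code.

```python
import tracemalloc


class LeakyMatcher:
    """Hypothetical operator that keeps a structure for every tuple and never releases it."""

    def __init__(self) -> None:
        self._retained = []

    def process(self, tup: dict) -> None:
        # The bug: each tuple's scratch structure stays referenced forever.
        self._retained.append({"tuple": tup, "scratch": bytearray(64)})


class CleanMatcher:
    """Same work, but the scratch structure is dropped when process() returns."""

    def process(self, tup: dict) -> None:
        scratch = {"tuple": tup, "scratch": bytearray(64)}
        del scratch


def flood(operator, count: int = 20_000) -> int:
    """Beacon-style test: push many synthetic tuples, return traced memory growth in bytes."""
    tracemalloc.start()
    try:
        before, _ = tracemalloc.get_traced_memory()
        for seq in range(count):
            operator.process({"seq": seq})
        after, _ = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return after - before


print("leaky growth:", flood(LeakyMatcher()))
print("clean growth:", flood(CleanMatcher()))
```

The leaky operator's growth scales with the number of tuples, while the clean one stays near zero; pushing a large volume of tuples is what makes even a small per-tuple leak stand out.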
