Congratulations, you’re about to embark on the journey to unlock your IBM OMEGAMON® data and put it to work in your analytics environment! We hope you find the installation and customization process simple. If you’d like an overview of the IBM® Z OMEGAMON Data Provider (ODP), check out our other blog post here.

The purpose of this blog entry is to provide an overview of the actions necessary to deploy ODP. It is not a replacement for the Installation and User’s Guide. You'll find that document here after 12 November. Please become familiar with the detailed tasks within that manual.

Find the pre-requisite software

First, make sure you’ve installed the necessary prerequisites. OMEGAMON Data Provider is not available as a stand-alone offering. It ships only within two monitoring suites: IBM Z Monitoring Suite 1.2.1 or IBM Z Service Management Suite 2.1.1, or later versions of each. IBM Z OMEGAMON Integration Monitor 5.6 must be deployed from one of those suites. An APAR/PTF for OMNIBASE is required to enable the ODP server: OA62052 / UJ06872. Finally, you need at least one monitoring agent enabled. At the time of this writing, only IBM Z OMEGAMON Monitor for z/OS 5.6 is supported as a source of unlocked data. Watch this space, as other agents are expected to be added rapidly.

Choose your deployment architecture

There are some architectural deployment choices to make.

- The ODP Data Connect server is a Java program. It can be deployed on z/OS, in zCX or off platform.

- A Zowe™ cross-memory server is required. If you already run Zowe, a plugin for the existing server is available. Otherwise, the package includes just the Zowe cross-memory server, which can be easily deployed, and the plugin can be added to it.

Note: Make these decisions up front, because the installation and customization actions differ depending on the deployment choices.

Configurable components

Overall, there are four parts for the successful deployment and usage of the OMEGAMON Data Provider:

- A data collection task. This “unlocks” Near Term History data and makes it available for sharing.

- The ODP Data Broker, which moves the data on z/OS from the collection task to the ODP Data Connect server, which may or may not be on z/OS.

- The ODP Data Connect server, which takes the data, transforms it, and packages it for delivery to or use by an analytics server.

- The analytics server that operates against the collected data. Examples include Elastic, Splunk, Kafka and Prometheus/Grafana services.

Each of these components needs to be properly deployed for this solution to work end-to-end. In practice, the configuration can be done in less than two hours after the code is installed. Figure 1 shows the first three parts of this environment.

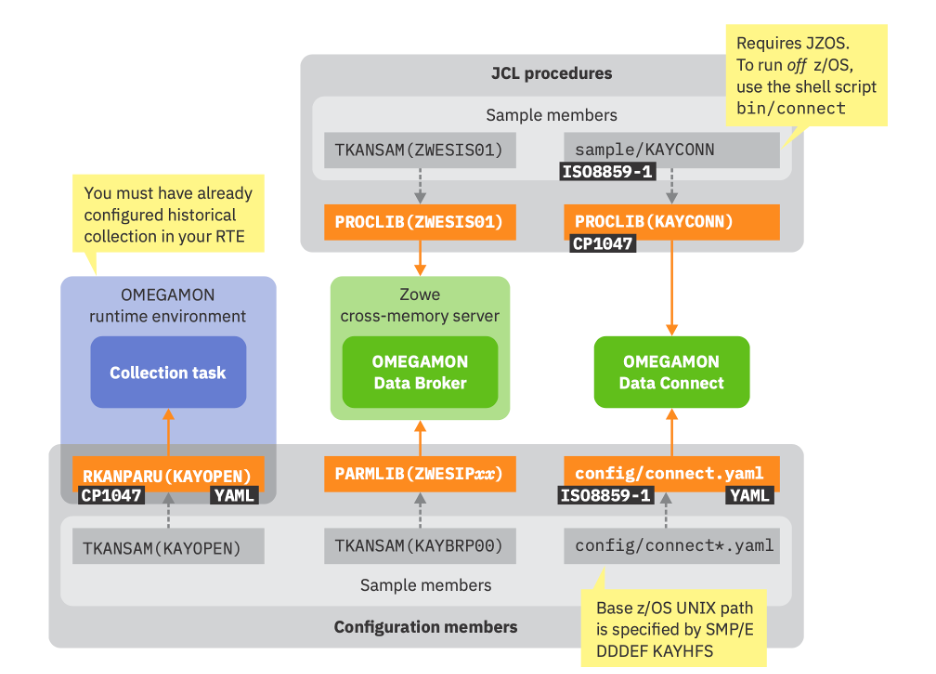

Figure 1: Components requiring configuration

Configuration actions fall into two groups: run options and configuration options.

An important note: Because components can run both on and off Z, it’s very important to set your 3270 terminal emulator or favorite editor to the appropriate code pages, which are listed in black in Figure 1. The YAML configuration files include special characters that may be displayed or saved incorrectly if the wrong code page is used while editing.
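To see why the code page matters, here is a minimal Python sketch. It is an illustration only: cp037 and cp500 are simply two EBCDIC code pages that ship with Python, not necessarily the ones listed in Figure 1, and the YAML fragment is hypothetical. Square brackets (used by YAML for inline lists) are one example of characters whose byte values differ between EBCDIC code pages.

```python
# Illustration only: cp037 and cp500 are two EBCDIC code pages bundled with
# Python. They are NOT necessarily the code pages listed in Figure 1.
SAMPLE = "tables: [a, b]"  # hypothetical YAML fragment with an inline list


def differing_chars(text: str, page_a: str, page_b: str) -> list[str]:
    """Return the characters of `text` whose bytes differ between two code pages."""
    return sorted({ch for ch in text if ch.encode(page_a) != ch.encode(page_b)})


def misread(text: str, written_as: str, read_as: str) -> str:
    """Show how `text` looks when saved in one code page and read in another."""
    return text.encode(written_as).decode(read_as)


if __name__ == "__main__":
    print("Characters that differ:", differing_chars(SAMPLE, "cp037", "cp500"))
    print("Saved as cp037, read as cp500:", misread(SAMPLE, "cp037", "cp500"))
```

Letters and digits survive the round trip, which is exactly why this kind of problem is easy to miss until a YAML parser rejects the file.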

Choose your data from Near Term History

Configuring data collection requires selecting the data to be made available for near term history collection. These attribute tables can be identified in the Enhanced 3270 User Interface (e3270UI) or the Tivoli Enterprise Portal (TEP). You can use the attribute table selection in the TEP or e3270UI to further filter what data may be shared.

Data collection includes a YAML file that identifies the attribute tables from OMEGAMON agents that will be made available to ODP. It also points to the location of the ODP Data Broker service on this instance of z/OS, which receives the collected data.

The ODP Data Broker is a plugin to a Zowe cross-memory server. The important decision here is whether to use an existing Zowe cross-memory server or the one included with ODP. If you use the included Zowe cross-memory server, you’ll need to update PROCLIB and the Program Properties Table (PPT), APF-authorize the server, and make it available as a started task. If you use a STEPLIB, it needs to be in PDSE format, because PDS libraries will not work or concatenate properly.

Next is the configuration and operation of the ODP Data Connect server. Running it on z/OS depends on the installation of the Java Batch Launcher and Toolkit for z/OS (JZOS) to ensure the code runs on an IBM z Integrated Information Processor (zIIP). Configuration depends on the connect.yaml file, where the input points back to the ODP Data Broker. For output, you can choose from:

- TCP – for sending (push) JSON formatted data to Elastic or Splunk

- Kafka – for sending (push) JSON-formatted data to topics on a Kafka data lake server

- Server – which enables the Metrics APIs that Prometheus can use to pull data from ODP for display in Grafana.
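Before pointing the TCP output at Elastic or Splunk, it can help to confirm that records actually arrive. The following is a minimal Python sketch of a throwaway receiver, not part of ODP. It assumes the TCP output sends one JSON object per line, and the port number 15046 is a hypothetical placeholder for whatever you configure.

```python
import json
import socket


def read_json_lines(conn: socket.socket, limit: int = 5) -> list[dict]:
    """Read up to `limit` newline-delimited JSON records from a connected socket."""
    records = []
    with conn.makefile("r", encoding="utf-8") as stream:
        for line in stream:
            line = line.strip()
            if not line:
                continue  # skip blank lines between records
            records.append(json.loads(line))
            if len(records) >= limit:
                break
    return records


def listen_once(host: str = "0.0.0.0", port: int = 15046, limit: int = 5) -> list[dict]:
    """Accept a single connection and return the first few records it sends."""
    # The port is a hypothetical value; use the one in your own configuration.
    with socket.create_server((host, port)) as server:
        conn, _addr = server.accept()
        with conn:
            return read_json_lines(conn, limit)


if __name__ == "__main__":
    for record in listen_once():
        # Print only the field names to get a feel for the record layout.
        print(sorted(record.keys()))
```

Run it on the target host, start the ODP Data Connect server with its TCP output pointing at that host and port, and check that field names are printed.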

Using the sample dashboards and building your own

The OMEGAMON data is now unlocked and consumable by analytics servers. Each of those servers needs to be configured properly to consume the data. The use of a self-describing JSON format helps simplify the configuration for these servers. For example, in the Elastic environment, a simple Logstash configuration file of 18 lines can be used for all ODP server instances. The shipped Elasticsearch index file allows for simple translation of the data. Sample Docker images of the servers, along with dashboards and sample data, can be found on GitHub.

For use within Splunk, to ingest JSON Lines, you need to define a Splunk source type that breaks each input line into a separate event, identifies the data format as JSON, and recognizes time stamps.
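The three things that source type has to do can be sketched in a few lines of Python. This is only an illustration of the idea, not Splunk configuration: the field name `timestamp` and the sample records are hypothetical, and the time stamps are assumed to be in ISO 8601 format.

```python
import json
from datetime import datetime


def to_events(payload: str, time_field: str = "timestamp") -> list[tuple[datetime, dict]]:
    """Turn a JSON Lines payload into (event time, record) pairs."""
    events = []
    for line in payload.splitlines():      # 1. one input line = one event
        if not line.strip():
            continue
        record = json.loads(line)          # 2. treat the line as JSON
        # 3. recognize the time stamp (hypothetical field name, ISO 8601 assumed)
        stamp = datetime.fromisoformat(record[time_field])
        events.append((stamp, record))
    return events


if __name__ == "__main__":
    sample = (
        '{"timestamp": "2021-11-12T10:15:00+00:00", "cpu": 1}\n'
        '{"timestamp": "2021-11-12T10:16:00+00:00", "cpu": 2}\n'
    )
    for when, event in to_events(sample):
        print(when.isoformat(), event)
```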

OMEGAMON Data Provider is intended to be highly available and secure.

There are existing best practices for deploying Java servers for high availability. Think in multiples of three.

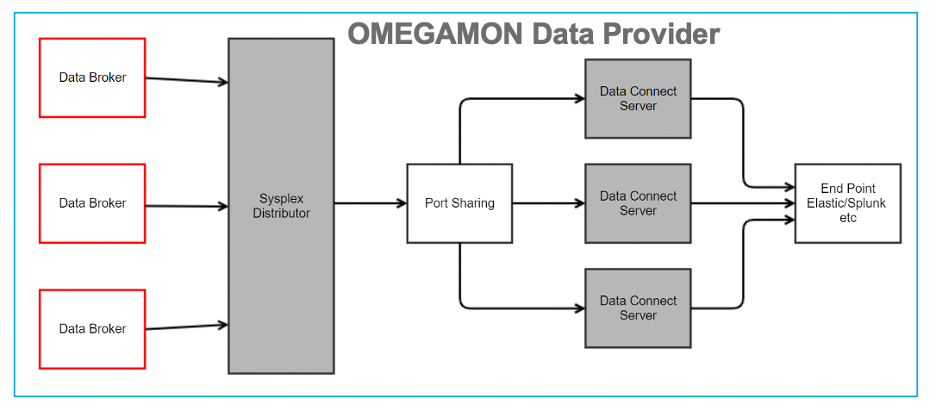

Figure 2: High Availability Deployment for ODP

Using Sysplex Distributor and port sharing enables a high availability topology.

For security, the use of SSL at the various connection points can make the environment more secure. Before adding a security configuration to your environment, run a proof of concept without security to ensure there are no configuration errors in the end-to-end workflow. This will make diagnostics far easier should there be a security configuration error introduced.
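For the unsecured proof of concept, a plain TCP reachability check on each hop can rule out basic network problems before any security is added. The sketch below is a generic Python check, not an ODP tool. The host names and ports are hypothetical placeholders.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a plain TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


# Hypothetical hops; replace with the hosts and ports from your own configuration.
HOPS = {
    "Data Broker -> Data Connect": ("connect.example.com", 15045),
    "Data Connect -> analytics server": ("analytics.example.com", 5046),
}

if __name__ == "__main__":
    for name, (host, port) in HOPS.items():
        status = "reachable" if can_connect(host, port) else "NOT reachable"
        print(f"{name}: {status}")
```

If a hop is reachable here but fails once security is enabled, the problem is most likely in the security configuration rather than in the network or the basic setup.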

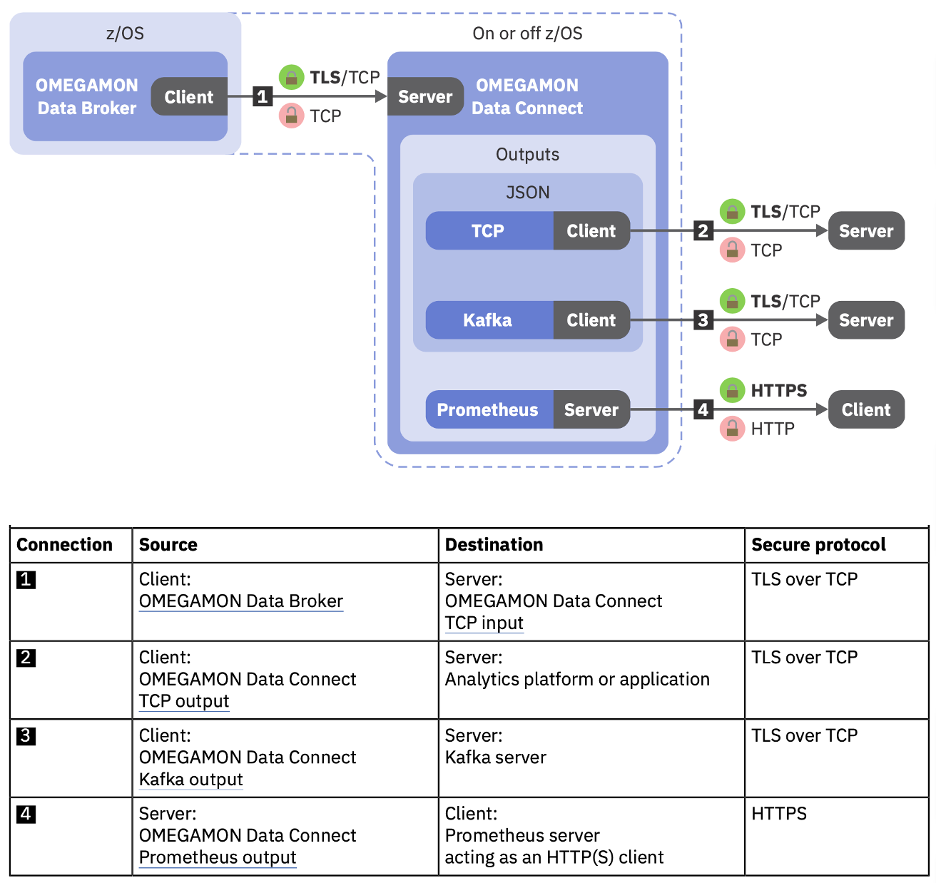

Figure 3: Locations for deploying network security with ODP

It’s important to understand the deployment topology. Network connections that use HiperSockets within a z/OS instance, an IBM Z server or a Parallel Sysplex may not need additional security. It is also important to understand the types of data being shared and the risk from insider or outsider access to that data. A private network deployment to the analytics servers may also be good enough to protect the data as is.

Different configuration settings are required depending on the connection type, shown as connections 1 to 4 in Figure 3. Please see the OMEGAMON Data Provider Installation and User’s Guide for a detailed explanation of the network security configuration requirements.

Hints and Tips

Lastly, a few hints and tips so you don’t repeat the mistakes that others have made:

- Don’t be confused by the Zowe cross-memory server, and do remember to add the KAYOPEN plugin.

- Elastic configuration can be very simple. Use the 18-line Logstash file included in GitHub.

- If you run the ODP Data Connect server off z/OS, use the bin/connect.sh script to start it.

- Don’t share volumes across Docker containers for Elastic. This makes migration to updated servers difficult.

When complete, you’ll have the benefit of new Key Performance Indicators coming from OMEGAMON and z/OS available within your choice of analytics servers. While this first deployment may seem different from existing OMEGAMON deployments, expect further changes that reduce the effort needed to get it deployed rapidly. Even with the current offering, the target of two hours or less should be achievable for your sandbox environment.
